From the proliferation of smartphones with built-in cameras to the increasing use of security cameras in public spaces, we are all on camera more than ever before. And with more images come more poorly shot photos. Most of us are not professional photographers, and extraneous distractions often get in the way of the perfect shot. In both consumer photography and outdoor surveillance systems, dirt, rain, and accidental scratches can obscure the subject and degrade image quality.

But data science is here to rescue amateur photographers and security apparatuses. In a 2013 paper titled “Restoring An Image Taken Through a Window Covered with Dirt or Rain,” Rob Fergus, from the Center for Data Science, and two other researchers from NYU, David Eigen and Dilip Krishnan, explored the possibility of using convolutional neural networks to clean up images that have been obscured by foreign debris.

Neural networks learn to perform a function by being shown examples of that function being performed. So to train a neural network to repair corrupted images, Eigen, Krishnan, and Fergus collected a dataset of clean images and their dirty counterparts. The network they created implicitly learned to recognize the parts of an image that were obscuring the intended subject. The trio wrote in their paper, “By asking the network to produce a clean output, regardless of the corruption level of the input, it implicitly must both detect the corruption and, if present, in-paint over it.”
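To make the idea of training on pairs concrete, here is a minimal sketch of how such pairs might be built and scored. It is not the authors' pipeline: the alpha-blend corruption model, the flat dirt color, the patch size, and every function name (`make_dirt_mask`, `corrupt`, `mse`, `make_pairs`) are assumptions chosen for illustration. The point it shows is that the target is always the clean patch, whether or not the input was corrupted.

```python
import random


def make_dirt_mask(size, n_specks, rng):
    """Return a size x size opacity mask (0 = clear, 1 = fully opaque)."""
    mask = [[0.0] * size for _ in range(size)]
    for _ in range(n_specks):
        r, c = rng.randrange(size), rng.randrange(size)
        mask[r][c] = rng.uniform(0.5, 1.0)
    return mask


def corrupt(clean, mask, dirt_value=0.2):
    """Alpha-blend a flat 'dirt' color over the clean patch (assumed model)."""
    return [
        [a * dirt_value + (1.0 - a) * px for px, a in zip(row, mask_row)]
        for row, mask_row in zip(clean, mask)
    ]


def mse(pred, target):
    """Mean squared error between two patches of equal shape."""
    n = sum(len(row) for row in target)
    total = sum(
        (p - t) ** 2
        for pred_row, target_row in zip(pred, target)
        for p, t in zip(pred_row, target_row)
    )
    return total / n


def make_pairs(n_pairs, size=8, seed=0):
    """Build (input, target) pairs; the target is always the clean patch."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n_pairs):
        clean = [[rng.random() for _ in range(size)] for _ in range(size)]
        n_specks = rng.randrange(0, 6)  # 0 specks means an uncorrupted input
        mask = make_dirt_mask(size, n_specks, rng)
        pairs.append((corrupt(clean, mask), clean))
    return pairs


pairs = make_pairs(100)

# Loss of a "do nothing" model that returns its input unchanged.
# A trained network would be expected to score below this baseline.
identity_loss = sum(mse(x, y) for x, y in pairs) / len(pairs)
```

Because some inputs carry no dirt at all, a model trained against this objective is penalized for altering clean pixels as well as for leaving dirt in place, which is the behavior the quoted passage describes.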

The following images show how the neural network was able to remove dirt particles from a photograph. The top row contains the original images, while the bottom row shows the network’s output. The first image contained no corruption and was left alone by the network. The second image was tarnished by synthetic dirt, and the network was able to clean it because it had seen similar examples during training. The third and fourth images featured different kinds of visual noise, and the network ignored these blemishes, which showed that it had learned to detect dirt specifically rather than any kind of visual noise.

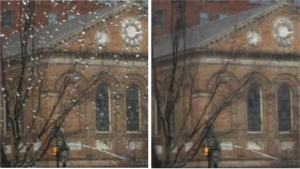

And the following images show how the neural network was able to remove water droplets from a photograph.

Large foreign objects exposed the network’s limits. In the case of dirt, large patches of corruption went undetected, as did oddly shaped or unusually colored ones. Similarly, when water droplets began to merge, they became too large for the network to process. The researchers wrote that such cases could be addressed in future work by using more varied images in the training stage, which would allow the network to recognize a wider variety of cases.

Eigen, Krishnan, and Fergus concluded their paper by saying that the project provided a foundation for a wide range of applications beyond simple image restoration. They even speculated about real-time uses of the system, in situations such as “…a digital car windshield to aid driving in adverse weather conditions, or enhancement of footage from security or automotive cameras in exposed locations.”

In an age where everyone can take a picture, we are all one step closer to shooting a decent photograph.